Additional material: Convexity of the Salpeter problem¶

The Salpeter problem is neither a linear nor a least-squares problem. Let us check if it is a convex problem!

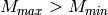

For Hamiltonian Monte-Carlo, we have already computed the gradient of the log-likelihood, which is our objective function:

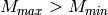

As we only have a single fit parameter -  - the Hessian is a 1x1 matrix and its single eigenvalue is:

- the Hessian is a 1x1 matrix and its single eigenvalue is:

If the Salpeter problem is convex, this eigenvalue has to be negative. Is it negative?

By definition, we have  and

and  .

.

Therefore, as long as  , the first two terms in brackets are always strictly positive.

, the first two terms in brackets are always strictly positive.

For  , also the third term is strictly positive because

, also the third term is strictly positive because  .

.

For  , the third term may in fact become negative. However, for

, the third term may in fact become negative. However, for  and

and  , the eigenvalue is still strictly negative at least until

, the eigenvalue is still strictly negative at least until  .

.

We conclude that for  and

and  and for all

and for all  and

and  the eigenvalue

the eigenvalue  of the Hessian is always strictly negative. In other words, in our case the Salpeter problem is convex, i.e., the log-likelihood function has only a single maximum which is therefore the global maximum.

of the Hessian is always strictly negative. In other words, in our case the Salpeter problem is convex, i.e., the log-likelihood function has only a single maximum which is therefore the global maximum.

Obviously, we could simply use a gradient method - ideally Newton’s method (we already have computed gradient and Hessian!) - in order to find the maximum quickly. Nevertheless, the Monte-Carlo methods also directly provide us with uncertainty estimates.

This is a very rare example, where a non-trivial problem can be directly tested for convexity.

![\frac{\partial\log\mathcal L}{\partial\alpha} = -D-\frac{N}{1-\alpha}\left[1 + \frac{1-\alpha}{M_{max}^{1-\alpha}-M_{min}^{1-\alpha}}\left(M_{min}^{1-\alpha}\log M_{min}-M_{max}^{1-\alpha}\log M_{max}\right)\right]](../_images/math/fc924d6fcc5cbdaf9cfbd3458de18460079ccae4.png)

![\frac{\partial^2\log\mathcal L}{\partial\alpha^2} = -N\left[\frac{1}{(1-\alpha)^2} + \left(\frac{M_{min}^{1-\alpha}\log M_{min}-M_{max}^{1-\alpha}\log M_{max}}{M_{max}^{1-\alpha}-M_{min}^{1-\alpha}}\right)^2 + \frac{M_{max}^{1-\alpha}\log^2 M_{max}-M_{min}^{1-\alpha}\log^2 M_{min}}{M_{max}^{1-\alpha}-M_{min}^{1-\alpha}}\right]](../_images/math/adf43d422435dc0d18f5bc5ae39b4eccea309e9f.png)